Look, if you’re building an AI product right now, the hardware you choose is just as critical as the model itself. The demand for compute is through the roof, and I’ve seen teams blow through thousands of dollars in a single weekend simply because they rented the wrong machine.

When it comes down to picking the best GPU for AI training or inference, most people just assume newer means better, or cheaper means more cost-effective. But in the real world? It’s not that simple. The NVIDIA A100 vs H100 debate, with the often-overlooked L40S thrown into the mix, comes down to a very specific math problem: cost-per-token versus time-to-market.

In this guide, we are going to look past the marketing brochures. We’ll break down real-world benchmarks, the hidden “legacy taxes,” and exactly which GPU to rent for AI based on your specific workload.

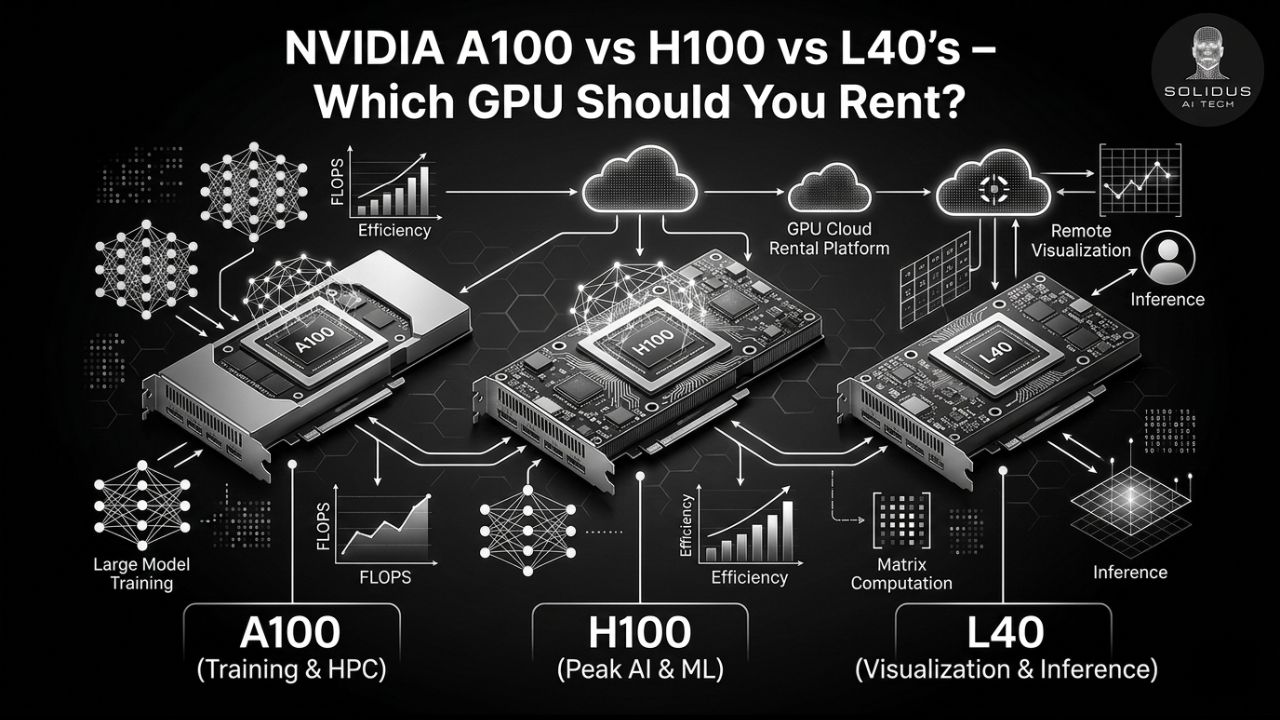

The Short Version: Specs at a Glance

Before we get into the weeds of benchmarking, let’s quickly look at what we are actually comparing. It helps to understand the physical differences before looking at the cost.

| Feature | NVIDIA H100 | NVIDIA A100 | NVIDIA L40S |
| --- | --- | --- | --- |
| Architecture | Hopper (TSMC 4N) | Ampere (TSMC 7nm) | Ada Lovelace (TSMC 4N) |
| Memory | 80GB HBM3 | 80GB or 40GB HBM2e | 48GB GDDR6 |
| Memory Bandwidth | Up to 3.35 TB/s | Up to 2 TB/s | 864 GB/s |
| FP32 Performance | Up to 67 TFLOPS | 19.5 TFLOPS | 91.6 TFLOPS |
| Ideal For | Massive LLM Training | Legacy AI / General Compute | Inference, Vision, Rendering |

Read our full Guide on Scaling AI Cloud Infrastructure for a deeper dive into memory bandwidth.

What Everyone Misses: Real-World Cost Per Token

Most comparison blogs will just tell you that the H100 is the fastest and the L40S is the cheapest to rent per hour. I mean, sure. But hourly rental rates are a terrible metric for AI. What you actually care about is throughput per dollar.

Let’s look at some recent real-world macro-benchmarks (like a full BERT-base fine-tune).

When you normalize the cost to process 10 million tokens, the math completely flips:

- H100: ~$0.88 per 10M tokens (Training)

- L40S: ~$2.15 per 10M tokens (Training)

- A100: ~$6.32 per 10M tokens (Training)

Read that again. The A100 might cost less per hour to rent than the H100, but because the H100’s Hopper architecture (and 4th-gen Tensor Cores) trains models so much faster, the H100 works out to about 86% cheaper per token for heavy training runs ($0.88 versus $6.32 per 10M tokens).
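If you want to run this math on your own quotes, here is a minimal sketch. The function names are ours, and the $3.00/hour and 10,000 tokens/second inputs are hypothetical placeholders, not measured values. Only the three per-10M-token costs come from the figures above.

```python
def cost_per_10m_tokens(hourly_rate_usd: float, tokens_per_second: float) -> float:
    """Dollars spent to process 10 million tokens at a steady throughput."""
    seconds_needed = 10_000_000 / tokens_per_second
    return hourly_rate_usd * seconds_needed / 3600


def savings_fraction(cheaper_cost: float, baseline_cost: float) -> float:
    """Fraction saved by paying cheaper_cost instead of baseline_cost."""
    return 1 - cheaper_cost / baseline_cost


# Training costs per 10M tokens quoted in this article.
h100_cost, l40s_cost, a100_cost = 0.88, 2.15, 6.32

print(f"H100 vs A100: {savings_fraction(h100_cost, a100_cost):.1%} cheaper")  # 86.1%
print(f"H100 vs L40S: {savings_fraction(h100_cost, l40s_cost):.1%} cheaper")  # 59.1%

# Hypothetical quote: $3.00/hour at a measured 10,000 tokens/second.
print(f"${cost_per_10m_tokens(3.00, 10_000):.2f} per 10M tokens")  # $0.83
```

Plug in the hourly rate from your provider and the tokens per second you actually measure on your own model. The hourly price alone tells you nothing until you divide it by throughput.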

Let’s break down where each GPU actually shines.

NVIDIA H100: The Undisputed Best GPU for AI Training

If you are training Large Language Models (LLMs) with over 50 billion parameters, the H100 is simply the king. Built on the Hopper architecture, it features an insanely fast 3.35 TB/s memory bandwidth.

Why it wins:

- Raw Speed: In real-world tests, an H100 cluster can process roughly 12x the training tokens per second of a comparable A100 setup.

- FP8 Support: The H100 supports FP8 precision through its Transformer Engine, which the A100 lacks. Each value takes half the bits of FP16, so the GPU moves half as much data per value and its Tensor Cores can roughly double their math throughput.

- The Verdict: Yes, it commands a premium rental price (often $2.50 to $4.00+ per hour depending on the cloud provider), but the speed means your engineers aren’t sitting around waiting for epochs to finish. It is the best GPU for AI training if time-to-market is your primary metric.
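To see why precision matters at this scale, here is a rough back-of-the-envelope sketch of how much memory the weights alone of a 50-billion-parameter model need at each precision. It is an illustration under simplifying assumptions: it ignores gradients, optimizer states, and activations, and real FP8 training typically keeps some tensors in higher precision. The helper name is ours.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "fp8": 1}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Memory for the model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


for precision in BYTES_PER_PARAM:
    gb = weight_memory_gb(50e9, precision)
    print(f"{precision}: {gb:.0f} GB of weights")
# fp32: 200 GB, fp16: 100 GB, fp8: 50 GB
```

At FP16 the weights alone already exceed a single 80GB card, which is why large training runs are spread across a cluster and why halving the bytes per value matters so much.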

NVIDIA L40S: The Hidden Gem for Inference and Vision

The L40S is the GPU everyone forgets about, but it’s arguably the smartest buy for a lot of startups right now. Built on the Ada Lovelace architecture, it doesn’t have the massive memory bandwidth of the H100 (it uses GDDR6 instead of HBM3), but it is insanely cost-effective.

Why it wins:

- Inference Economics: While the H100 wins on training cost, the L40S is arguably the cheapest GPU for inference. Because its hourly rental rate is significantly lower, serving chat responses or running RAG (Retrieval-Augmented Generation) pipelines is incredibly cheap.

- Visual Compute: If your AI product involves image generation (like Stable Diffusion), video processing, or 3D rendering, the L40S destroys the competition. It was specifically optimized for graphics-heavy workloads.

- The Verdict: Rent the L40S for bursty micro-services, visual AI applications, or serving models in production.
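The inference argument comes down to this: when traffic is bursty, the GPU is limited by demand, not by its peak speed, so you pay the hourly rate whether or not the card is busy. The sketch below shows that reasoning. Every number in it (rates, demand, capacities) is a hypothetical placeholder rather than a benchmark, and the function name is ours.

```python
def cost_per_million_served(hourly_rate_usd: float,
                            demand_tps: float,
                            capacity_tps: float) -> float:
    """Dollars per 1M tokens actually served.

    The GPU can only serve what users request, capped by what it can produce.
    """
    served_tps = min(demand_tps, capacity_tps)
    tokens_per_hour = served_tps * 3600
    return hourly_rate_usd / tokens_per_hour * 1_000_000


# Hypothetical cards: a fast, pricey one and a slower, cheaper one.
fast = {"hourly_rate_usd": 3.00, "capacity_tps": 4000}
cheap = {"hourly_rate_usd": 1.00, "capacity_tps": 1500}

for demand in (500, 10_000):  # light bursty traffic vs. saturating traffic
    fast_cost = cost_per_million_served(demand_tps=demand, **fast)
    cheap_cost = cost_per_million_served(demand_tps=demand, **cheap)
    print(f"demand={demand} tok/s  fast=${fast_cost:.2f}  cheap=${cheap_cost:.2f}")
```

With these made-up inputs, the cheaper card costs a third as much per served token at light load, and the gap narrows sharply once demand saturates both cards. Measure your real traffic before you commit.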

NVIDIA A100: Is the Enterprise Workhorse Dead?

Let’s talk about the NVIDIA A100 vs H100 debate. For the last few years, the A100 was the gold standard. Today? It’s starting to look like a legacy tax.

Why it’s struggling:

- Because cloud providers still charge decent money for the A100, its slow speed (compared to Hopper) makes it the most expensive option per token for both training and inference in many modern workloads.

- When you should still use it: Honestly, the only time you should actively seek out an A100 today is if you have legacy mixed workloads, or if you specifically need exact Ampere reproducibility for an older ML pipeline. Otherwise, you are better off upgrading to the H100 for speed or laterally moving to the L40S for cost savings.

Need help migrating legacy models? Check out our Cloud Migration Services for Machine Learning.

Framework: Which GPU to Rent for AI?

If you are still confused, here is a dead-simple, actionable framework to decide which GPU to rent for AI based on your immediate needs:

- If you are pre-training or fine-tuning massive LLMs: Rent the H100. The high hourly cost is entirely offset by how fast it finishes the job.

- If you are serving an AI chatbot or running a RAG app: Rent the L40S. It offers the lowest cost per inference-token and handles bursty chat traffic perfectly.

- If you are generating images, video, or 3D content: Rent the L40S. It was built for visual processing.

- If you have strict legacy code tied to the Ampere architecture: Rent the A100. (But start planning your migration).
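If it helps to bake this into a provisioning script, the framework above can be sketched as a simple lookup. The `Workload` names and the `recommend_gpu` helper are hypothetical, invented for illustration. The mapping itself just restates the four rules above.

```python
from enum import Enum


class Workload(Enum):
    LLM_TRAINING = "pre-training or fine-tuning massive LLMs"
    CHAT_OR_RAG_SERVING = "serving an AI chatbot or running a RAG app"
    VISUAL_GENERATION = "generating images, video, or 3D content"
    LEGACY_AMPERE = "strict legacy code tied to the Ampere architecture"


# One entry per rule in the framework above.
RECOMMENDATION = {
    Workload.LLM_TRAINING: "H100",
    Workload.CHAT_OR_RAG_SERVING: "L40S",
    Workload.VISUAL_GENERATION: "L40S",
    Workload.LEGACY_AMPERE: "A100",
}


def recommend_gpu(workload: Workload) -> str:
    """Return the GPU this article suggests renting for a given workload."""
    return RECOMMENDATION[workload]


for workload in Workload:
    print(f"{workload.value}: rent the {recommend_gpu(workload)}")
```

Treat it as a starting point. Your own cost-per-token measurements should override the defaults.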

Final Thoughts

Choosing the right GPU isn’t about just grabbing the biggest card your cloud budget allows. It’s about matching the silicon architecture to your specific workload. If you get it right, the savings are large: in the training figures above, the H100 comes in at roughly one-seventh of the A100’s cost per token.

Stop guessing with your infrastructure budget. Are you ready to optimize your AI stack and stop overpaying for compute? Contact the Solidus AI Tech team today for a custom cloud architecture audit. We’ll help you provision the exact GPUs you need, no fluff, just performance.

FAQs

- Is the L40S GPU better than the A100?

  For image generation and other graphics-heavy workloads, yes, and it is usually the cheaper card to rent for inference. The A100 still has more memory bandwidth (up to 2 TB/s versus 864 GB/s) and comes with up to 80GB of memory versus 48GB.

- Which GPU is better, A100 or H100?

  For heavy training runs, the H100. NVIDIA estimates the H100 delivers roughly 6× more compute performance than the A100.

- What is the difference between L40 and H100?

  The H100 is a high-end data-center GPU designed for AI and machine learning workloads. It has more memory (80GB HBM3 versus 48GB GDDR6) and far higher memory bandwidth than the L40, which is an Ada Lovelace card aimed at graphics and visual compute.