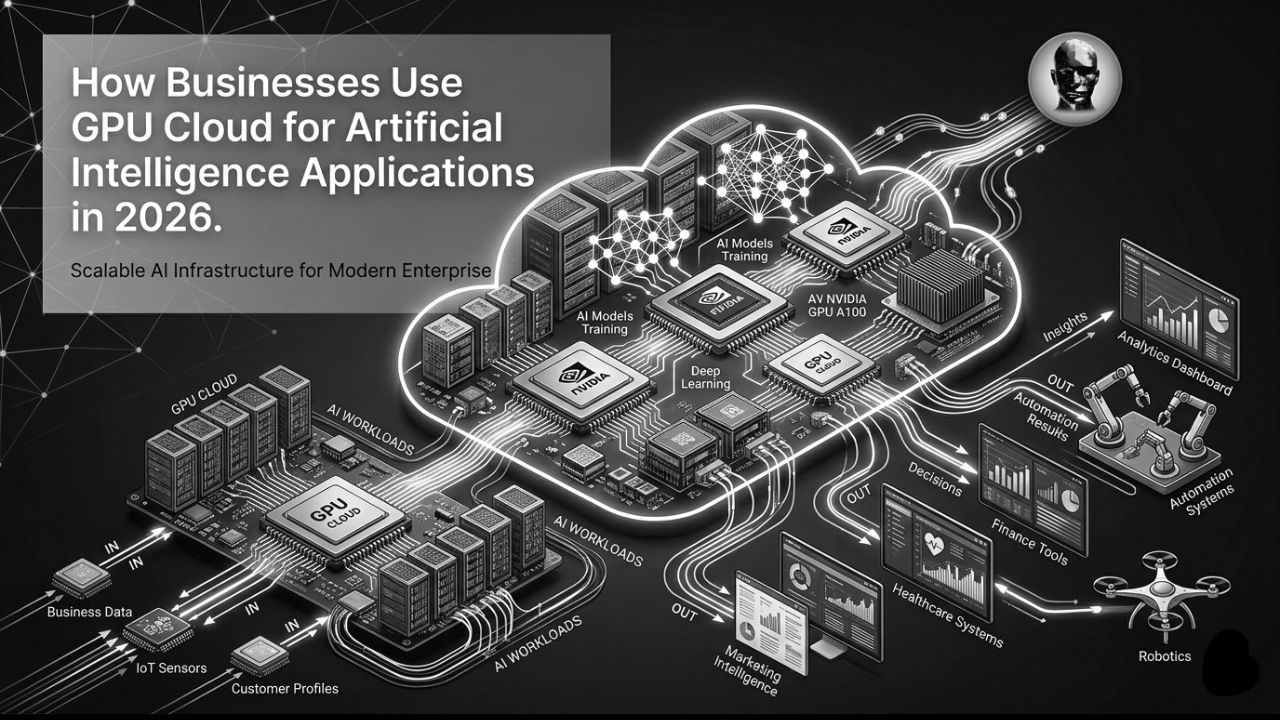

If your company is building artificial intelligence, you already know that the main hurdle isn’t a lack of data or ideas; it’s computing power. Everything from training large language models (LLMs) to running real-time predictive analytics demands more and better processing capability.

A few years ago, businesses that needed massive computing power had to buy, house, and cool their own server racks. The rise of GPU Cloud for AI has transformed high-performance computing, allowing organizations of every size to rent supercomputer-level power by the minute.

So how are companies translating raw cloud compute into business value? In this article, we break down that question and look at how modern businesses are using an Enterprise GPU Cloud to scale operations, cut costs, and dominate their industries.

What is a GPU Cloud and Why Does AI Need It?

Graphics Processing Units, or GPUs, are built for parallel processing, meaning they can perform many operations at the same time. This is how they differ from Central Processing Units (CPUs), which have a relatively small number of powerful cores optimized for running tasks largely one after another. A GPU contains thousands of smaller cores working simultaneously, which makes it well suited to the massive matrix multiplications at the heart of machine learning and deep learning.
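To see why matrix multiplication parallelizes so well, here is a minimal pure-Python sketch (an illustration only, not how GPU libraries are actually implemented). The helper names `cell` and `matmul` are hypothetical. Each output cell is the dot product of one row and one column, so no cell depends on any other and they can be computed in any order. This version still runs on a single CPU core; a GPU exploits exactly this independence by computing thousands of cells at once.

```python
from typing import List, Optional, Tuple

Matrix = List[List[float]]


def cell(a: Matrix, b: Matrix, i: int, j: int) -> float:
    """One output element: the dot product of row i of `a` and column j of `b`."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul(a: Matrix, b: Matrix,
           order: Optional[List[Tuple[int, int]]] = None) -> Matrix:
    """Multiply an n x m matrix `a` by an m x p matrix `b`.

    Each output cell depends only on one row of `a` and one column of `b`,
    so the cells can be computed in any order. This CPU sketch still runs
    them one at a time; a GPU spreads them across thousands of cores.
    """
    n, p = len(a), len(b[0])
    if order is None:
        order = [(i, j) for i in range(n) for j in range(p)]
    out: Matrix = [[0.0] * p for _ in range(n)]
    for i, j in order:
        out[i][j] = cell(a, b, i, j)
    return out


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
row_major = matmul(a, b)
# Computing the cells in reverse order gives the same answer,
# because no cell waits on another.
reversed_order = matmul(a, b, order=[(1, 1), (1, 0), (0, 1), (0, 0)])
print(row_major)  # [[19, 22], [43, 50]]
```

A real model multiplies matrices with millions of cells, which is why hardware that can work on many of them simultaneously pays off.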

An Enterprise GPU Cloud provides on-demand access to these powerful chips, such as NVIDIA A100s and H100s, hosted in remote data centers. Instead of a multi-million-dollar capital expenditure to build an on-premise server room, businesses shift to an operational expenditure model, paying only for the compute time they actually use.

Why Enterprise GPU Cloud Beats On-Premise (The ROI Factor)

Many cloud providers market raw speed, but the bigger business advantage is financial and operational agility. Here is why AI Infrastructure for Enterprises is rapidly moving to the cloud:

- Instant Scalability: Training an AI model might take 50 GPUs running for a week, but serving that model for daily inference might only require 2 GPUs. Scalable AI Computing in the cloud lets you spin resources up and down on demand, so you avoid paying for idle hardware.

- Access to the Latest Hardware: The hardware lifecycle in AI is brutally fast, and an on-premise server bought today can be outclassed within a couple of years. Cloud providers continuously refresh their fleets with the latest NVIDIA and AMD architectures, keeping your business at the cutting edge.

- Reduced Maintenance Burden: Your engineering team should be building AI, not replacing faulty cooling fans. Cloud infrastructure offloads hardware maintenance, physical security, and power management to the provider.
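The scalability point is easiest to see in GPU-hours. The sketch below uses the 50-GPU training and 2-GPU inference figures from above and adds one assumption of our own: a four-week window with one week of training followed by three weeks of inference. It deliberately uses no prices, since rates vary by provider; it only compares how many GPU-hours you would be billed for in the cloud against how many you would have to own to cover the training peak.

```python
HOURS_PER_WEEK = 24 * 7  # 168


def gpu_hours(gpus: int, hours: int) -> int:
    """GPU-hours consumed by `gpus` GPUs running for `hours` hours."""
    return gpus * hours


# Scenario from the text: train on 50 GPUs for one week, then serve on 2 GPUs.
# Assumption (hypothetical): a four-week window, i.e. one week of training
# followed by three weeks of inference.
training = gpu_hours(50, HOURS_PER_WEEK)        # 8,400 GPU-hours
inference = gpu_hours(2, 3 * HOURS_PER_WEEK)    # 1,008 GPU-hours
rented = training + inference                   # 9,408 GPU-hours billed

# Owning enough hardware for the training peak means paying for 50 GPUs
# around the clock, whether or not they are busy.
owned = gpu_hours(50, 4 * HOURS_PER_WEEK)       # 33,600 GPU-hours
idle = owned - rented                           # 24,192 GPU-hours idle
utilization = rented / owned                    # 0.28

print(f"rented: {rented} GPU-hours, owned: {owned}, utilization: {utilization:.0%}")
```

Under these assumptions, owned hardware would sit idle about 72% of the time, which is the cost that on-demand scaling avoids.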

Industry AI Use Cases: How GPU Cloud Powers Real Business

So, what are companies actually building with all this compute? Here are the top Industry AI Use Cases driving the adoption of cloud GPUs.

1. Generative AI and Intelligent Chatbots

Today, one of the most common uses of GPU Cloud for Business is running generative AI. Many companies are building customer-facing chatbots that understand context, tone, and complex product catalogues. Cloud GPUs provide low-latency inference, so these chatbots can reply in real time instead of leaving users waiting.

2. Healthcare and Life Sciences

In healthcare, speed can save lives. Pharmaceutical companies are modernizing with the help of scalable AI computing, using it to simulate molecular interactions for drug discovery, which can shorten parts of the research timeline from years to months. Similarly, hospitals are using GPU-accelerated computer vision on medical images such as MRIs and X-rays, helping clinicians detect anomalies at an earlier stage.

3. Financial Services and Fraud Detection

Banks and trading firms process enormous volumes of transactions. A GPU cloud lets them run complex deep learning models that detect fraudulent patterns in real time and block suspicious transactions instantly. It also supports algorithmic trading strategies that rely on split-second market predictions.

4. Retail and Hyper-Personalization

E-commerce giants rely on GPU clusters to power their search and recommendation engines. These clusters analyze a user’s browsing history, demographic data, and purchase behaviour across millions of products to deliver hyper-personalized recommendations that raise conversion rates.

Choosing the Right GPU Tier for Your Workload

A major mistake businesses make is over-provisioning. Not every AI application requires the most expensive hardware. Understanding which GPU to rent is crucial for cost management:

- Heavy Training (LLMs, Deep Learning): You need large GPU memory and massive memory bandwidth. Look for flagship GPUs like the NVIDIA H100 or the B200. They are expensive per hour, but they chew through massive datasets so fast that your overall training bill can actually be lower.

- Inference and Fine-Tuning: If you are taking an existing model and adapting it, mid-tier GPUs like the A100 or RTX 6000 Ada Generation often hit the sweet spot between price and performance.

- Edge AI and Basic Inference: For running smaller, localized models or standard computer vision tasks, highly efficient GPUs like the NVIDIA L4 or T4 are incredibly cost-effective.

By matching the workload to the right hardware, companies can cut their cloud AI bills by up to 40%.
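The claim that a pricier GPU can produce a smaller bill comes down to simple arithmetic: total cost is the hourly rate multiplied by the hours the job runs. The sketch below makes that concrete. All rates and speedups are made-up placeholders, not real prices or benchmarks, and the `GpuOption`, `job_cost`, and `cheapest` names are hypothetical. It also idealizes run time as shrinking in direct proportion to a GPU’s relative speed on the workload.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GpuOption:
    name: str
    hourly_rate: float     # hypothetical price per GPU-hour, not a real quote
    relative_speed: float  # throughput on THIS workload vs. the baseline (1.0)


def job_cost(option: GpuOption, baseline_hours: float, gpus: int = 1) -> float:
    """Cost of a job that takes `baseline_hours` on the baseline GPU.

    Idealized: assumes run time shrinks in direct proportion to relative_speed.
    """
    hours = baseline_hours / option.relative_speed
    return hours * option.hourly_rate * gpus


def cheapest(options: List[GpuOption], baseline_hours: float) -> GpuOption:
    """Pick the option with the lowest total job cost."""
    return min(options, key=lambda o: job_cost(o, baseline_hours))


mid_tier = GpuOption("mid-tier (hypothetical)", hourly_rate=2.0, relative_speed=1.0)

# Heavy training job: assume the flagship is 3x faster on this workload.
flagship_heavy = GpuOption("flagship (hypothetical)", hourly_rate=5.0, relative_speed=3.0)
# Lighter workload: assume the flagship is only 2x faster.
flagship_light = GpuOption("flagship (hypothetical)", hourly_rate=5.0, relative_speed=2.0)

heavy_winner = cheapest([mid_tier, flagship_heavy], baseline_hours=300)  # flagship: 500 vs 600
light_winner = cheapest([mid_tier, flagship_light], baseline_hours=300)  # mid-tier: 600 vs 750
```

With these placeholder numbers, the flagship only wins when its speedup on your workload exceeds its price ratio (here 5.0 / 2.0 = 2.5x). That is why heavy training tends to justify top-tier hardware while lighter jobs often do not.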

Security and Compliance in the AI Cloud

A critical and often overlooked factor is data sovereignty and security. When you feed proprietary company data or customer information into an AI model, security becomes paramount. To address this, many modern GPU cloud providers offer private networking, dedicated bare-metal GPU servers, and strict compliance certifications. For large enterprises, these isolated cloud environments make it possible to pursue AI innovation without putting sensitive data at risk.

The Future of AI is in the Cloud

The barrier to entry for building enterprise-grade artificial intelligence is lower than it has ever been. By leveraging a GPU Cloud for Business, your team gets the agility and raw horsepower required to actually compete in today’s market.

So, free yourself from the worry of server racks, cooling bills, and hardware depreciation, and start focusing on building AI models that solve your customers’ problems.

Ready to scale your enterprise’s AI capabilities without breaking the bank? Discover the latest infrastructure strategies, architecture insights, and optimization tools at Solidus AI Tech.

FAQs

How does GPU cloud work?

A GPU cloud provider hosts GPU servers in remote data centers. You rent GPU instances on demand, run your training or inference workloads on them over the network, and pay for the compute time you use. Because cloud GPUs offer low-latency, high-throughput processing, the same infrastructure also supports real-time simulations for applications such as gaming, virtual reality, and autonomous vehicle testing, where performance and responsiveness are critical.

How do companies use GPUs?

Companies use GPUs for fast parallel processing of large datasets, which is essential for training AI models and for real-time analytics. Time-sensitive applications such as fraud detection, predictive maintenance, and video analytics benefit from this low-latency processing.

What is a GPU cloud for AI?

A GPU cloud is a remote computing environment that provides on-demand access to high-performance Graphics Processing Units. It allows businesses to train, test, and run artificial intelligence and machine learning models without purchasing physical hardware.

Why is GPU better than CPU for AI?

GPUs are built for parallel processing, meaning they can perform thousands of calculations simultaneously. This is essential for the complex math required by neural networks and AI algorithms, making GPUs much faster than CPUs for these workloads.

What is scalable AI computing?

Scalable AI computing refers to the ability to instantly increase or decrease computing resources based on the current demands of your AI workload.
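In practice, "based on current demand" usually means some scaling rule. The sketch below shows one simple, hypothetical rule for an inference service: provision just enough GPUs to cover the current request rate, with a floor so something stays warm and a ceiling for budget or quota. The function name, the 50-requests-per-second capacity, and the 1 to 64 GPU bounds are illustrative assumptions, not any provider’s actual autoscaling API.

```python
import math


def gpus_needed(requests_per_second: float, per_gpu_capacity: float,
                min_gpus: int = 1, max_gpus: int = 64) -> int:
    """Hypothetical scaling rule: provision just enough GPUs for current demand.

    `per_gpu_capacity` is the request rate one GPU can sustain for this model;
    the result is clamped between `min_gpus` (keep something warm) and
    `max_gpus` (a budget or quota ceiling).
    """
    if per_gpu_capacity <= 0:
        raise ValueError("per_gpu_capacity must be positive")
    wanted = math.ceil(requests_per_second / per_gpu_capacity)
    return max(min_gpus, min(max_gpus, wanted))


# Assumed capacity of 50 requests/second per GPU (illustrative only).
for demand in (0, 30, 420, 10_000):
    print(demand, "req/s ->", gpus_needed(demand, per_gpu_capacity=50), "GPUs")
```

Rounding up with `math.ceil` ensures capacity never falls short of demand, while the clamp keeps a sudden traffic spike from scaling costs without limit.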